Research statement

Research

My research addresses a fundamental tension in computational problem-solving: many of the most important real-world problems — from optimal network design to gene regulatory inference to resource allocation under constraints — are bilevel in structure and combinatorial in nature, making them simultaneously NP-hard and resistant to gradient-based methods. Classical solvers scale poorly; neural methods lack interpretability; neither handles hierarchical decision-making cleanly.

My work develops surrogate-assisted co-evolutionary frameworks that make these problems tractable. Exact evaluation is expensive because every outer candidate requires solving an inner optimisation problem. The core idea is to replace these evaluations with learned Gaussian Process surrogates that predict solution quality at a fraction of the cost, while co-evolutionary pressure maintains population diversity and helps the search avoid local optima. This approach has reduced computation cost by over 96% on canonical NP-hard benchmarks (Max-Cut) and extends naturally to biologically meaningful problems such as Gene Regulatory Network inference.

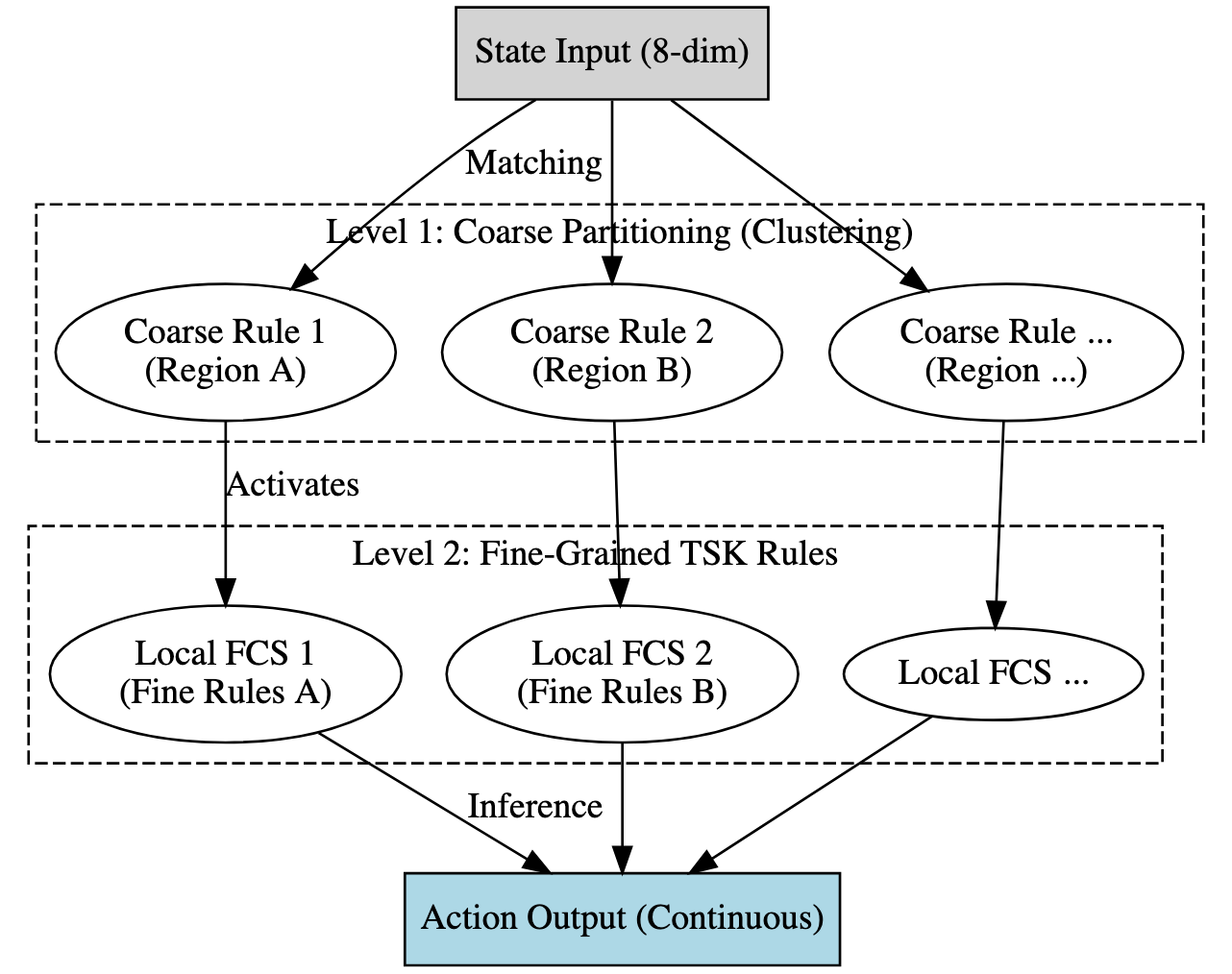
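As a rough illustration of the surrogate idea described above, the sketch below pre-screens mutated outer candidates with a Gaussian Process posterior mean and spends exact inner solves only on the most promising few. It is a toy under stated assumptions, not the framework from my papers: the leader-follower problem, the Hamming-distance kernel, and every name (`leader_fitness`, `gp_mean`, `surrogate_assisted_search`) are hypothetical, and the co-evolutionary component is omitted for brevity.

```python
import itertools
import math
import random


def leader_fitness(x, weights):
    """Exact (expensive) evaluation: brute-force the follower's best response to x.

    Toy problem (an assumption): the follower picks y to maximise a cut-style
    score; the leader wants that best score small, so fitness is its negative.
    """
    m = len(weights[0])
    best = -math.inf
    for y in itertools.product((0, 1), repeat=m):
        score = sum(weights[i][j] * (x[i] ^ y[j])
                    for i in range(len(x)) for j in range(m))
        best = max(best, score)
    return -best


def kernel(u, v, gamma=0.3):
    """Exponential kernel on Hamming distance between two bit tuples."""
    return math.exp(-gamma * sum(a != b for a, b in zip(u, v)))


def solve(a, b):
    """Solve a @ z = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(m[r][c]))
        m[c], m[p] = m[p], m[c]
        for r in range(c + 1, n):
            f = m[r][c] / m[c][c]
            for k in range(c, n + 1):
                m[r][k] -= f * m[c][k]
    z = [0.0] * n
    for r in reversed(range(n)):
        z[r] = (m[r][n] - sum(m[r][k] * z[k] for k in range(r + 1, n))) / m[r][r]
    return z


def gp_mean(train_x, train_y, queries, noise=1e-3):
    """GP posterior mean with a constant prior mean equal to the training average."""
    mu = sum(train_y) / len(train_y)
    gram = [[kernel(a, b) + (noise if i == j else 0.0)
             for j, b in enumerate(train_x)] for i, a in enumerate(train_x)]
    alpha = solve(gram, [y - mu for y in train_y])
    return [mu + sum(kernel(q, a) * w for a, w in zip(train_x, alpha))
            for q in queries]


def surrogate_assisted_search(weights, generations=10, offspring=20,
                              exact_per_gen=3, n_init=5, seed=0):
    rng = random.Random(seed)
    n = len(weights)
    archive = {}  # outer candidate -> exact fitness (each entry is one inner solve)

    def evaluate_exactly(x):
        if x not in archive:
            archive[x] = leader_fitness(x, weights)

    for _ in range(n_init):
        evaluate_exactly(tuple(rng.randint(0, 1) for _ in range(n)))

    for _ in range(generations):
        parents = sorted(archive, key=archive.get, reverse=True)[:5]
        children = []
        for _ in range(offspring):
            child = list(rng.choice(parents))
            child[rng.randrange(n)] ^= 1  # single bit-flip mutation
            children.append(tuple(child))
        xs = list(archive)
        predicted = gp_mean(xs, [archive[x] for x in xs], children)
        ranked = sorted(zip(predicted, children), reverse=True)
        for _, child in ranked[:exact_per_gen]:  # only the top few get an exact solve
            evaluate_exactly(child)

    best = max(archive, key=archive.get)
    return best, archive[best], len(archive)


rng = random.Random(1)
weights = [[rng.random() for _ in range(8)] for _ in range(8)]
best, value, exact_evals = surrogate_assisted_search(weights)
print(best, value, exact_evals)  # at most 35 exact solves for 200 screened children
```

With these defaults the search screens 200 children but performs at most 5 + 10 × 3 = 35 exact inner solves. The savings in this toy come from that ratio alone and say nothing about the figures reported for the actual framework.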

A parallel thread in my research addresses the interpretability of complex learned policies. Reinforcement learning agents can solve continuous control tasks with superhuman performance, yet their decision logic remains opaque — a critical barrier for deployment in safety-certified systems. I develop frameworks that distil deep RL policies into compact Takagi–Sugeno–Kang fuzzy rule bases, producing human-readable IF-THEN representations that preserve performance while enabling formal verification.
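To make the target representation concrete, here is a minimal sketch of how a first-order Takagi–Sugeno–Kang rule base produces an output: each rule fires according to Gaussian memberships on the inputs, and the result is the firing-strength-weighted average of the rules' linear consequents. The `Rule` structure, the Gaussian membership choice, and the two example rules are assumptions for illustration only. The sketch shows inference with a given rule base, not the distillation procedure that learns the rules from an RL policy.

```python
import math
from dataclasses import dataclass


@dataclass
class Rule:
    centers: list  # Gaussian membership centre for each input (the IF part)
    widths: list   # Gaussian membership width for each input
    coeffs: list   # linear consequent weights (the THEN part)
    bias: float    # linear consequent offset


def firing_strength(rule, x):
    """Product of per-input Gaussian membership degrees."""
    return math.prod(
        math.exp(-0.5 * ((xi - c) / s) ** 2)
        for xi, c, s in zip(x, rule.centers, rule.widths)
    )


def tsk_predict(rules, x):
    """Weighted average of rule consequents, weighted by firing strength."""
    strengths = [firing_strength(r, x) for r in rules]
    total = sum(strengths)
    if total == 0.0:
        raise ValueError("no rule fires for this input")
    outputs = [sum(a * xi for a, xi in zip(r.coeffs, x)) + r.bias for r in rules]
    return sum(w * o for w, o in zip(strengths, outputs)) / total


# Hypothetical two-rule controller on a single input (e.g. a normalised angle):
#   IF angle is NEGATIVE THEN action = -1
#   IF angle is POSITIVE THEN action = +1
rules = [
    Rule(centers=[-1.0], widths=[1.0], coeffs=[0.0], bias=-1.0),
    Rule(centers=[1.0], widths=[1.0], coeffs=[0.0], bias=1.0),
]
print(tsk_predict(rules, [0.0]), tsk_predict(rules, [0.8]))
```

Because every rule is a readable condition paired with a linear action, the rule base can be inspected rule by rule, which is the property that motivates distilling a policy into this form.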

Going forward, I am extending these methods to temporal influence maximisation on large dynamic networks — where cascade structure evolves over time — and to compression of large language model retrieval pipelines via 1-bit quantisation. The unifying thread is making hard problems tractable without sacrificing the interpretability that makes solutions trustworthy.

PhD Dissertation · Syracuse University · Expected Aug 2026

Bilevel Optimization on Large Scale Non-Convex, Constrained Combinatorial Problems

Advisors: Dr. Chilukuri K. Mohan & Dr. Venkata Gandikota

Peer-reviewed publications

S. Araballi, C. K. Mohan, S. Khan.

Distilling Deep Reinforcement Learning into Interpretable Fuzzy Rules: An Explainable AI Framework

Compressed 26,000+ Soft Actor-Critic (SAC) agent experiences into 74 human-readable IF-THEN rules using Takagi–Sugeno–Kang fuzzy partitioning, enabling safety-certified decisions in continuous control systems.

Research Highlight

S. Araballi, V. Gandikota, P. Sharma, P. Khanduri, C. Mohan.

A Surrogate-Assisted Co-Evolutionary Framework for Bilevel Optimization

A hybrid evolutionary-surrogate framework achieving >96% computation cost reduction on NP-hard bilevel problems. Validated on Max-Cut and extended to Gene Regulatory Networks.

Research Highlight

S. Araballi, Q. Ni, F. Chew, B. Egan, C. Mohan.

Developing an Ad Viewing Retention Model for TV Comedy Through Machine Learning

Random Forest and Neural Network models achieving 80%+ accuracy in predicting TV ad retention from second-by-second ComScore data. Recognised in a ComScore industry press release.

F. Chew, B. Egan, C. Mohan, D. Xu, S. Araballi.

Stay Tuned: Predicting Ad Viewing Retention in TV Programs Using Machine Learning

Identified key attributes predictive of audience retention: ad pod position, program viewing percentage, ad originality. Provided actionable ad scheduling recommendations.

F. Chew, B. Egan, C. Mohan, S. Araballi, D. Xu.

Predicting Effective Ad Curation with Neural Networks and Statistical Analyses to Maximise Audiences During TV Ad Breaks

Combined neural network predictions and statistical analysis to identify optimal ad curation strategies for maximising TV audience retention.

Under review & in preparation

S. Araballi & V. Gandikota.

T-RIS: Temporal Reverse Influence Sampling for Large-Scale Temporal Networks

A scalable influence maximisation algorithm for large temporal networks with time-varying edge weights — addresses limitations of static RIS on dynamic real-world graphs.

S. Araballi, C. Mohan & S. Khan.

Crisp Distance Metrics for Fuzzy Rules

Novel crisp distance metrics for comparing and clustering fuzzy IF-THEN rules — enabling principled pruning of large FuzzyKB systems for XAI applications.

S. Araballi & V. Gandikota.

1-Bit Retrieval-Augmented Generation

1-bit quantisation within RAG pipelines for efficient, low-memory retrieval-augmented generation — bridging neural network compression and LLM inference.
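One common way to apply 1-bit quantisation to retrieval is to keep only the sign of each embedding coordinate and rank documents by Hamming distance. The sketch below illustrates that general idea under stated assumptions. It is not the method of the paper in preparation: the sign-binarisation scheme, the function names (`binarise`, `hamming`, `retrieve`), and the four-dimensional embeddings are all hypothetical.

```python
def binarise(vec):
    """Pack the sign bit of each coordinate into one int (1 bit per dimension)."""
    code = 0
    for v in vec:
        code = (code << 1) | (1 if v > 0 else 0)
    return code


def hamming(a, b):
    """Number of differing bits between two packed codes."""
    return bin(a ^ b).count("1")


def retrieve(query_vec, doc_codes, k=2):
    """Indices of the k documents whose codes are closest to the query code."""
    q = binarise(query_vec)
    return sorted(range(len(doc_codes)), key=lambda i: hamming(q, doc_codes[i]))[:k]


# Made-up embeddings purely for illustration.
docs = [
    [0.9, -0.2, 0.4, -0.7],
    [-0.5, 0.3, -0.1, 0.8],
    [0.6, -0.1, -0.3, -0.9],
]
doc_codes = [binarise(d) for d in docs]  # stored index: one bit per dimension
print(retrieve([0.8, -0.3, 0.5, -0.6], doc_codes))
```

Storing one bit per dimension instead of a 32-bit float shrinks the index by a factor of 32, at the cost of discarding magnitude information.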